At Comms Multilingual, we are very interested in the latest developments within the assessment and certification industries. To that end, we are asking industry thought leaders to provide guest contributions to our blog, which we hope will be of interest to our community.

Our first contributor is Dr. Joy Matthews-López. Dr. Matthews-López is a Senior Psychometrician at Professional Testing and an adjunct instructor of mathematics at Ohio University. She writes about test adaptation and localization from a psychometric perspective.

The primary role of psychometrics in the adaptation/localization process is to provide evidence that the source and target forms are equivalent and that both yield meaningful, interpretable scores. For that evidence to be compelling, it must rest on defensible arguments for reliability, validity and fairness.

Psychometrics routinely touches every stage of testing, from job/task/content analysis through score reporting. When tests are adapted for use in other languages and/or cultures, however, the role of psychometrics expands to address issues specific to adapted instruments. For example, the construct(s) a test is designed to measure may be defined differently in the target population, or may not exist there at all. In such cases, decentering the source constructs (revising the source material so that neither version is bound to one culture) may be necessary. Another challenge is seat time: should examinees taking the adapted exam be allowed the same amount of time as those taking the source test? These are but two of the many psychometric challenges associated with adaptation/localization.

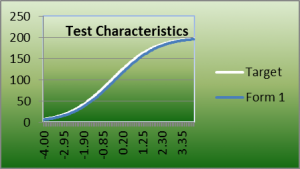

Establishing source-to-target equivalence is mission-critical when adapting or localizing a measurement instrument, and both the test forms and the examinee populations must be considered. To check population equivalence, one may compare demographic and score data between the source and target groups. Equivalence between the instruments themselves rests on three components: content, psychometric, and construct equivalence. Content equivalence can be established by verifying that the number of operational items in each content area of each form aligns with the exam's blueprint.

Assessing psychometric equivalence between source and target forms may include comparing technical characteristics of the operational forms, such as the number of items per form, mean item difficulty (classical and/or IRT), test information at the cut score (for criterion-referenced exams), expected number-correct score at the cut, form-level reliability, item exposure rates, and how closely the forms' test information functions and test characteristic curves agree.
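To make these form-level comparisons more concrete, here is a minimal Python sketch; it is an illustration, not the author's procedure. It assumes a two-parameter logistic (2PL) IRT model without the 1.7 scaling constant, item parameters for both forms already placed on a common (linked) scale, and a cut score expressed on the theta scale. The `Item` class, the function names, the content areas, and all parameter values are hypothetical. The sketch checks item counts against a blueprint and computes mean difficulty, the expected number-correct score (the TCC value) at the cut, test information at the cut, and the largest gap between the two forms' TCCs over a grid of ability values.

```python
import math
from collections import Counter
from dataclasses import dataclass


@dataclass
class Item:
    area: str   # blueprint content area (hypothetical labels)
    a: float    # 2PL discrimination
    b: float    # 2PL difficulty (theta scale)


def p_correct(item, theta):
    """2PL probability of a correct response at ability theta (no 1.7 constant)."""
    return 1.0 / (1.0 + math.exp(-item.a * (theta - item.b)))


def blueprint_gaps(form, blueprint):
    """Content areas whose item count differs from the blueprint: {area: (required, actual)}."""
    counts = Counter(item.area for item in form)
    areas = sorted(set(blueprint) | set(counts))
    return {area: (blueprint.get(area, 0), counts.get(area, 0))
            for area in areas
            if blueprint.get(area, 0) != counts.get(area, 0)}


def tcc(form, theta):
    """Test characteristic curve: expected number-correct score at theta."""
    return sum(p_correct(item, theta) for item in form)


def form_information(form, theta):
    """2PL test information: sum of a^2 * P * (1 - P) over the form's items."""
    total = 0.0
    for item in form:
        p = p_correct(item, theta)
        total += item.a ** 2 * p * (1 - p)
    return total


def form_summary(form, theta_cut):
    """Form-level statistics to compare between source and target forms."""
    return {
        "items": len(form),
        "mean_b": sum(item.b for item in form) / len(form),
        "expected_nc_at_cut": tcc(form, theta_cut),
        "info_at_cut": form_information(form, theta_cut),
    }


def max_tcc_gap(source, target, grid):
    """Largest absolute difference between the two forms' TCCs over a theta grid."""
    return max(abs(tcc(source, t) - tcc(target, t)) for t in grid)


if __name__ == "__main__":
    blueprint = {"Safety": 2, "Procedures": 1}  # hypothetical blueprint
    source = [Item("Safety", 1.1, -0.4), Item("Safety", 0.9, 0.2), Item("Procedures", 1.3, 0.5)]
    target = [Item("Safety", 1.0, -0.3), Item("Safety", 0.8, 0.4), Item("Procedures", 1.2, 0.6)]
    theta_cut = 0.0  # hypothetical cut score on the theta scale
    grid = [x / 10 for x in range(-30, 31)]  # theta from -3.0 to 3.0

    print("Target blueprint gaps:", blueprint_gaps(target, blueprint))
    print("Source:", form_summary(source, theta_cut))
    print("Target:", form_summary(target, theta_cut))
    print("Max TCC gap:", round(max_tcc_gap(source, target, grid), 3))
```

The sketch only reports the differences; it does not decide whether they are acceptable. Any tolerance for TCC gaps or information differences would have to be set by the testing program.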

Establishing construct equivalence may require gathering a wide variety of evidence, such as inter-scale correlations, goodness-of-fit indices, dimensionality studies, and plots of relevant data (for example, plots of person ability against item difficulty). Reliability indices such as KR-20 or coefficient alpha, and separation indices (for Rasch-based programs), should also be examined.
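The reliability and separation indices named above can be computed from scored response data. The Python sketch below is a minimal illustration, assuming a complete examinee-by-item matrix with no missing responses (and 0/1 scoring for KR-20); the function names and data are hypothetical. The separation function applies the standard Rasch relationship G = sqrt(R / (1 - R)); in practice, R would be the Rasch person (or item) reliability reported by the calibration software rather than KR-20.

```python
import math
from statistics import pvariance


def coefficient_alpha(responses):
    """Cronbach's coefficient alpha for an examinee-by-item score matrix."""
    k = len(responses[0])
    if k < 2:
        raise ValueError("need at least two items")
    item_vars = [pvariance([row[j] for row in responses]) for j in range(k)]
    total_var = pvariance([sum(row) for row in responses])
    if total_var == 0:
        raise ValueError("total scores have zero variance")
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


def kr20(responses):
    """Kuder-Richardson formula 20 for dichotomously (0/1) scored items."""
    n, k = len(responses), len(responses[0])
    if k < 2:
        raise ValueError("need at least two items")
    p = [sum(row[j] for row in responses) / n for j in range(k)]  # item p-values
    total_var = pvariance([sum(row) for row in responses])
    if total_var == 0:
        raise ValueError("total scores have zero variance")
    return k / (k - 1) * (1 - sum(pj * (1 - pj) for pj in p) / total_var)


def separation_index(reliability):
    """Rasch separation G = sqrt(R / (1 - R)) for a Rasch reliability R in [0, 1)."""
    if not 0 <= reliability < 1:
        raise ValueError("reliability must be in [0, 1)")
    return math.sqrt(reliability / (1 - reliability))


if __name__ == "__main__":
    # Hypothetical scored responses: rows are examinees, columns are items.
    target_responses = [
        [1, 1, 1, 0],
        [1, 1, 0, 0],
        [1, 0, 0, 0],
        [1, 1, 1, 1],
        [0, 0, 0, 0],
    ]
    print("KR-20:", round(kr20(target_responses), 3))
    print("alpha:", round(coefficient_alpha(target_responses), 3))
    print("separation at R = 0.8:", round(separation_index(0.8), 2))
```

For dichotomously scored items, KR-20 and coefficient alpha give the same value when both use the same definition of variance, because KR-20 is the special case of alpha for 0/1 items. Running the functions separately on source and target response data yields one value per form to compare.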

Psychometrics provides the technical framework needed to support the adaptation process. In my next post, I will provide some detailed examples of adapted items and show how translation and adaptation may differ.